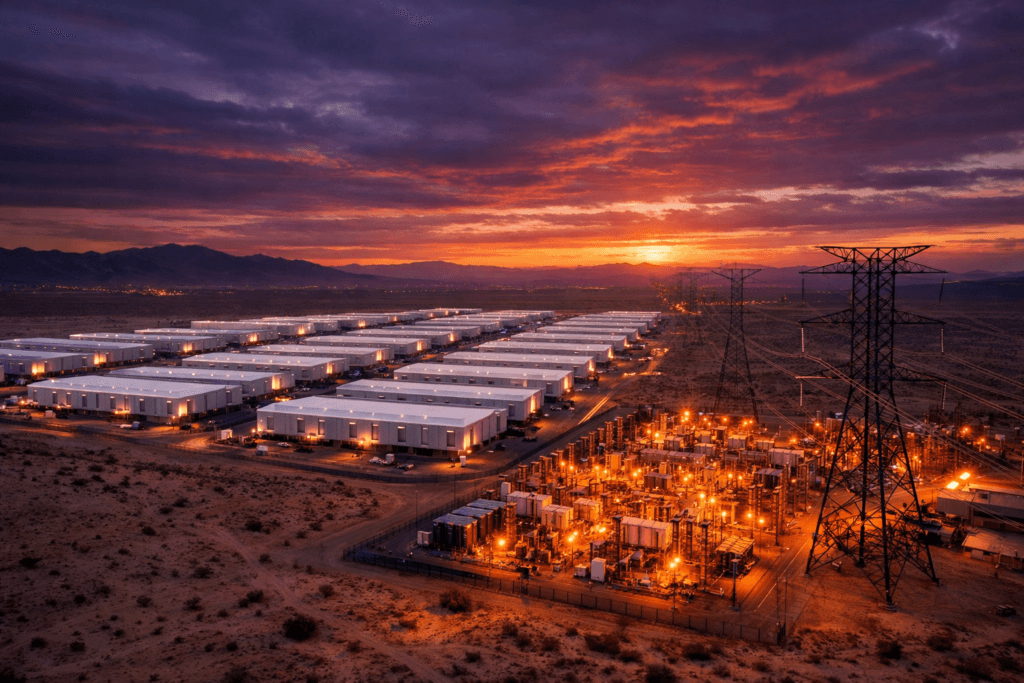

The Core Story: What Did Google Agree To?

Google has committed to a “demand response” program covering 350 megawatts of capacity, roughly 13% of a massive 2.7-gigawatt power procurement deal, according to TechCrunch. Under the arrangement, Google will reduce or suspend operations at certain data center facilities during periods of peak grid demand, effectively volunteering to go dark so that homes, hospitals, and other critical infrastructure can keep their lights on.

The deal, negotiated with regional utility providers, is designed to address the central tension in AI infrastructure: the industry needs vastly more electricity than the current grid can reliably supply, but utilities and regulators are reluctant to approve new connections without guarantees that Big Tech won’t destabilize the power system for everyone else.

Google’s 2.7 GW total commitment is staggering in context. For comparison, the entire city of San Francisco consumes approximately 1 GW of power at peak. Google is effectively securing enough electricity to power nearly three San Franciscos while still agreeing to give some of it back when the grid needs help.

Context & Global Impact: The AI Power Crisis Is Real

Google’s concession is not an isolated corporate decision. It is a symptom of a structural crisis that is reshaping energy policy, utility regulation, and infrastructure investment across the developed world.

AI data centers now consume more electricity than some countries. The International Energy Agency estimated in late 2025 that global data center electricity consumption would reach 1,000 terawatt-hours by 2026, roughly equivalent to the entire electricity consumption of Japan. AI training and inference workloads are the primary driver, with a single large language model training run consuming as much electricity as 100 American homes use in a year.

Utilities are rejecting new connections. In Virginia’s “Data Center Alley,” home to the world’s largest concentration of data centers, Dominion Energy has a queue of over 30 gigawatts of pending connection requests. The utility has begun rejecting or delaying applications because the grid cannot physically support the demand. Similar backlogs exist in Ireland, the Netherlands, and Singapore.

The “demand response” model is a paradigm shift. Historically, demand response programs were designed for heavy industrial users such as manufacturers, steel mills, and aluminum smelters that could flex their consumption. The fact that a technology company is now participating in the same programs underscores how much data centers have come to resemble heavy industry in their energy profiles.

Nuclear power is back on the table. Google, Microsoft, and Amazon have all announced investments in nuclear energy, including small modular reactors (SMRs) and even the restart of decommissioned plants, as a long-term solution. But those facilities are years from being operational, leaving demand response as the only near-term tool available.

The Hidden Cost to AI Performance

There is a technical consequence to Google’s agreement that most coverage has overlooked. When Google reduces data center operations during peak grid demand, something has to give. That “something” is likely to be non-critical AI inference workloads, the processes that power features like search summaries, Google Assistant responses, and advertising optimization.

During demand response events, users may experience slower response times, reduced AI feature availability, or degraded performance without ever knowing why. This represents a subtle but significant shift: for the first time, the quality of AI services is being directly constrained by the physical limits of the electrical grid.

What This Means for Your Electric Bill

Google’s massive power procurement does not come free. When utilities approve multi-gigawatt connections for data centers, the infrastructure costs (new transmission lines, substation upgrades, and grid reinforcement) are typically socialized across all ratepayers. Consumer advocacy groups have begun raising concerns that residential electricity bills could increase by 5–15% in regions with heavy data center concentration, effectively forcing households to subsidize the AI boom.

What’s Next: The Race for Watts

Google’s deal is just one piece of a global scramble. Microsoft is pursuing a 5 GW nuclear partnership. Amazon has committed over $10 billion to data center power infrastructure. Meta’s $27 billion Nebius deal (covered earlier by BreezyScroll) includes a 1.2-gigawatt AI factory in Missouri.

The question is no longer whether AI companies can build enough chips or train large enough models. It is whether the physical world can generate enough electricity to power the digital one. Google’s willingness to turn off its own data centers is the clearest signal yet that, for now, the answer is no.

Frequently Asked Questions

Why is Google agreeing to shut down data centers?
To secure approval for a 2.7-gigawatt power deal. Utilities require “demand response” commitments (agreements to reduce consumption during peak grid stress) before approving connections of this scale.

How much power does AI consume?
The IEA estimates global data center electricity consumption will reach 1,000 terawatt-hours by 2026, equivalent to Japan’s total electricity consumption. AI training and inference workloads are the primary driver of growth.

Could this affect Google’s AI services?
Potentially. During demand response events, non-critical AI workloads may be deprioritized, which could cause slower response times or reduced feature availability for users.

Google Agrees to 'Demand Response' Program to Secure Power Deal

Breezy Scroll•


Disclaimer: This content has not been generated, created or edited by Achira News.

Publisher: Breezy Scroll
